And across the continent’s highways and desert roads, another migration gathers – this one made not of birds or fish, but of humans.

They go by many names: nomads, drifters, snowbirds, boondockers, van dwellers. Some travel in search of warmth, others for freedom and community. And for a growing number, the migration is not simply seasonal but economic.

Among those is 55-year-old Derek Hansler, a chef by trade.

Known to friends as D Rock, he spends the summer in New Hampshire visiting his children and grandchildren, parking his 2003 Van Terra shuttle bus in driveways along the way. He picks up gigs when he needs cash or a place to park, but the season is less work than service, volunteering in the communities he revisits every year.

“New Hampshire tells me when it’s time to roll,” he jokes. He likes to stay until the leaves turn crimson, then leave before they fall. When that moment arrives, he says goodbye to his family and points his bus 3,300 miles (5,310km) to the south-west.

In Seattle, as the rainy maritime chill brings out jackets, Stephanie Scruggs and Gustavo Costo prepare to head south. After three years on the road, they recently decided to move in together – a milestone in their nomadic life that meant trading their two vans for a half-finished bus they named Magpie, a weathered 1999 International Thomas.

It’s been more than five years since Scruggs, then 35, was diagnosed with a rare and aggressive brain cancer known as a grade three anaplastic astrocytoma. After surgery, six weeks of radiation, and a year of chemo, doctors told her she might have two to five years to live.

Retiree Theresa Webster makes a final pass through the Oregon campground where she volunteers each year as a summer host. Fire rings are doused. Bathrooms are scrubbed. Trash is gathered and hauled away.

In return for the work, she has been given what has become increasingly rare: a legal place to park.

With the season over, she packs up Old Yeller, the mustard yellow 1977 Dodge van she bought for $3,000. Her dog, Miles, rides shotgun as she takes the long way south, first turning east toward her son’s driveway in Iowa, folding briefly back into the family rhythms of grandkids and shared meals. When winter presses in, she points Old Yeller down the interstate.

In driveways, campgrounds, and borrowed corners of parking lots, autumn departures like these unfold across North America. Soon these migrants will spill on to back roads, highways and interstates, license plates tracing faint lines south from Alaska, Quebec, Maine and everywhere in between, navigating by a kind of winter constellation – an invisible beacon in the American southwest that most maps barely notice, a place they return to year after year.

A small desert outpost called Quartzsite, Arizona.

*****

For many road trippers speeding along Interstate 10, Quartzsite, or “Q-town” as it is affectionately known, appears little more than a gas station and fast-food stopover halfway between Los Angeles and Phoenix. It sits in the northern reaches of the Sonoran Desert, 20 miles east of the Colorado River.

Summertime temperatures hover in the triple digits, sending the valley’s human residents indoors to air-conditioned rooms and its wild inhabitants – including desert tortoises, cottontails and kangaroo rats – into underground lairs.

According to the 2020 census, the population is 2,413.

But as winter approaches and temperatures fall to something more forgiving, the great migration of motorhomes, RVs, buses, trailers, vans, cars and trucks begins to pour into Quartzsite – and more precisely, into the vast stretches of open desert that surround it.

But not everyone keeps moving.

Tens of thousands instead gather inside BLM-designated long-term visitor areas, or LTVAs, seasonal enclaves established in 1983 to accommodate the growing number of people wintering in the desert. Seven LTVAs stretch across Arizona and California. But the largest of these and the center of gravity is La Posa – Spanish for “the resting place” – an 11,400-acre stretch of land on the outskirts of Quartzsite.

Each winter, a vibrant social world takes hold. Clubs form and dissolve – singles groups, quilters, metal-detecting hobbyists – while daily gatherings emerge at sunrise and continue late into the night. Around them, infrastructure hums into being: laundromats that double as showers, RVs converted into hair salons, swap meets, mail-forwarding counters for lives without fixed addresses, mechanics coaxing life from failing engines.

Theresa remembers arriving in Old Yeller for the first time in 2018. She had kept her apartment in Oregon just in case van life didn’t work out. But as the desert opened around her, the contingency plan dissolved.

“This is it,” she remembers thinking. “This is the life.” She had grown tired of paying rent and bills and having nothing left over – a treadmill she could never step off. Out here, there were no landlords to answer to. Eight years later, the desert around Quartzsite still carries that weight for her. “It has a magical feeling,” she said.

Community and infrastructure move in tandem here, creating a seasonal metropolis layered on to the existing town. But what allows it to function year after year is something more fundamental: affordability.

For $180, a permit allows camping from 15 September through 15 April. At La Posa, that price includes trash collection, vault toilets and a dump station. It’s worth pausing on the math. For less than the cost of a single night in many American hotels, a person can legally live on public lands in the desert for seven months – about $26 a month, or less than a dollar a day.

Many LTVA visitors are traditional snowbirds: retirees who maintain homes elsewhere and migrate seasonally for warmth. But for a growing number of others, the permit functions differently: as a legal foothold in a housing system that has increasingly shut them out. [...]

Dr Graham Pruss, executive director of the National Vehicle Residency Coalition – a network that advocates for the rights of people living in vehicles – spends part of each winter moving between desert camps as he connects with vehicle residents across the country. He sees many of them as part of what he calls an “economic refugee class.” They are people displaced not by conflict or famine, he said, but by rents, wages and the shrinking availability of stable housing.

He describes what he calls “settlement bias” – our tendency to treat familiar forms of dwelling as legitimate and unfamiliar ones as suspect.

“If you park an RV on to a private space and you pay for rent, that’s called a mobile home park,” he said. “But if you move that RV 100 feet onto the street, we call that homelessness.

“These are people who are using their private property to solve a housing crisis that we all see around us,” he added. “That adaptive strategy is innovative. It creates solutions where they don’t exist.”

For many vehicle residents, public lands have become one of the few legal geographies where long-term habitation remains possible.

“Public lands are the lifeline for a lot of us,” said Mary Feuer, a longtime public land resident. “When the money runs out, they literally support us.”

by Joshua Jackson, Re:Public

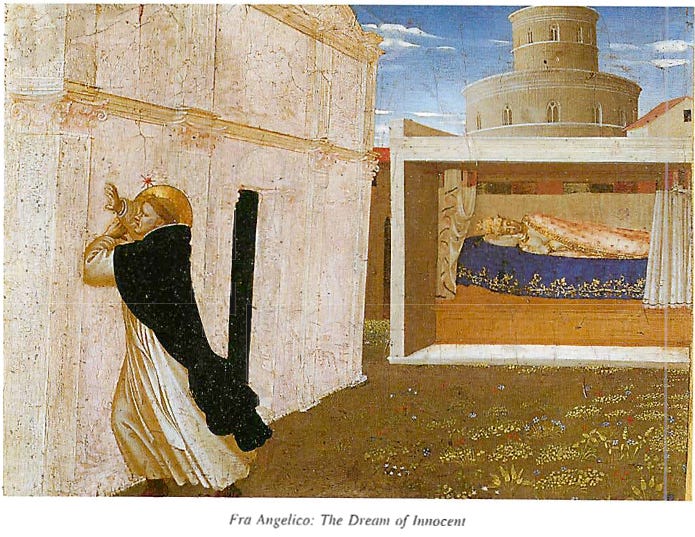

Image: Joshua Jackson