“No one in this body thinks the Senate is laser-focused on the most pressing issues facing the nation,” Sasse told his colleagues. “No one.”

The indictment was bipartisan, surgical, and delivered with the calm of a man who had considered it carefully before speaking. The Senate, he argued, had surrendered its institutional identity to the rhythms of the 24-hour news cycle, to the demand for sound bites, and to the incentive to grandstand for a narrow base and raise money rather than legislate for a country. “The people despise us all,” he said. “And why is this? Because we’re not doing our job.”

It served as a warning that went unheeded, and 11 years later, we’re watching more dysfunction in government than ever before. Sasse, now dying of Stage 4 pancreatic cancer at 54, is still saying the same thing. The diagnosis has not changed the message. It has sharpened it.

Whether Sasse was a “good” or “effective” senator is debatable. Whether Washington currently has enough senators like him is not a close question.

The criticism that followed him throughout his eight-year tenure is almost entirely subjective. His critics on the Left saw a man willing to deplore Trumpism in public while voting with President Donald Trump’s agenda in practice. His critics on the Right, particularly as the party realigned, saw a posturing institutionalist more interested in making points and serving as a pundit than in getting on board fully with the president’s policies. The most durable version of this critique runs something like: He gave great speeches and passed no significant legislation.

Yuval Levin, founding editor of National Affairs and director of Social, Cultural, and Constitutional Studies at the American Enterprise Institute, largely rejects both sets of criticisms. On the Trump question specifically, Levin is direct: “The notion that there was much more he could have done to hold Trump to account is misdirected and mistaken. He took on Trump when he disagreed with him, and when he thought Trump had exceeded his authority or violated his oath. And unlike most Senate Republican critics of Trump, he ran for reelection and won after doing that.”

The objection to the lack of signature legislation mistakes the Senate’s function for a body it was never designed to be. In the framework Sasse spent years articulating, the Senate is not primarily a factory for producing legislation. It is a deliberative institution meant to apply friction to democratic impulses in the House of Representatives, to slow things down when people want to move too fast, and to force the executive and judiciary to operate within appropriate constitutional limits. By that standard, which is closer to the Founders’ intent than the one applied by Sasse’s critics, he understood and performed his role better than most of his colleagues.

The “pundit” critique oversimplifies his actual record. Sasse served on the Senate Intelligence Committee throughout his tenure, and his work on China there was substantive and largely ahead of the political mainstream. When it was still unfashionable for a Republican to identify Beijing as a generational geopolitical threat rather than an irritating trade partner, Sasse was making that case in the committee rooms that mattered. He had genuine expertise in China’s intelligence operations and, accordingly, used his position, spending considerable time in secure facilities at times when most of his colleagues were busier developing a social media strategy.

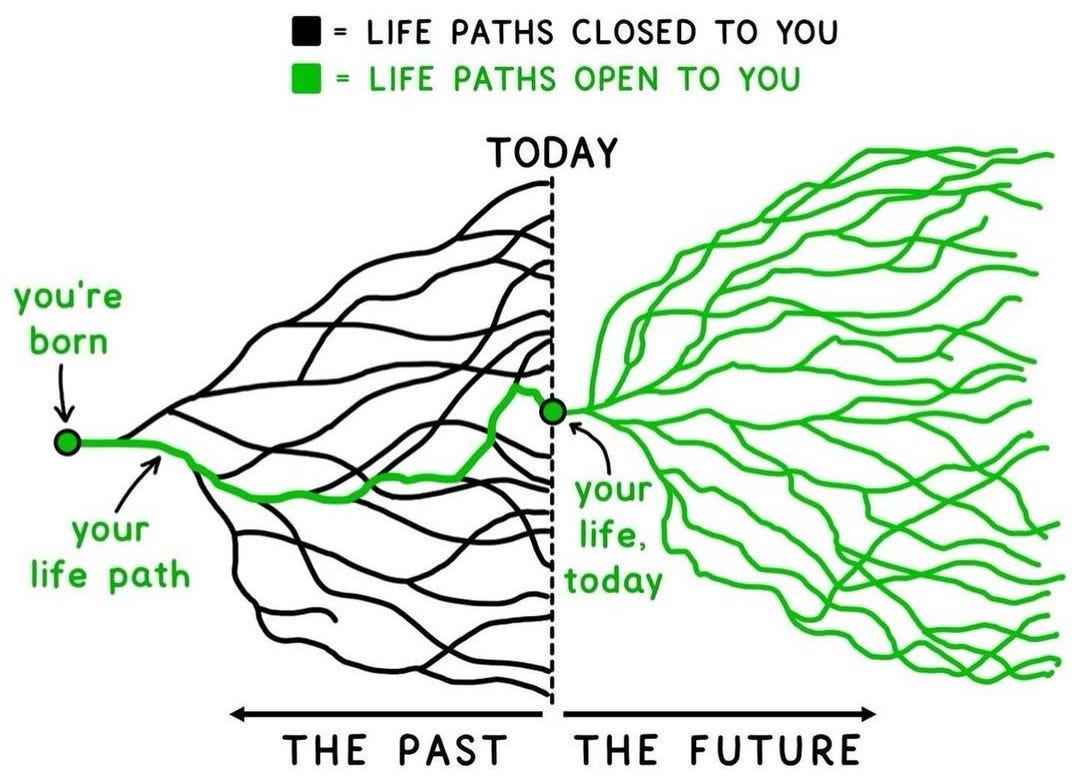

Sen. Mark Warner (D-VA), who worked alongside him on the intelligence committee, offered perhaps the most precise characterization of what made Sasse different, telling Scott Pelley on 60 Minutes in April that Sasse “never really thought about things as conservative, liberal. He thought much more about issues, such as the future and the past.” Senate Majority Leader John Thune (R-SD) said Sasse had a “concern not just for today, but for tomorrow and the future” and that he “wasn’t distracted by all the noise that goes around us on a daily basis.” [...]

Levin, who watched Sasse’s tenure closely, offers a candid accounting of his legislative limitations. “It’s true that Ben was not an active legislator, advancing proposals, sponsoring and co-sponsoring legislation, and building coalitions,” he said. “He was active in some key committees, especially the Intelligence Committee, where it seemed to him that active engagement could make a difference. But I think he concluded this was not the case in some of his other committees and that he might be more useful as a critic and observer of the institution. No individual senator gets a lot done right now, and of course, that’s part of the frustration he had.”

But the moments that defined Sasse as a senator were the ones that did not produce legislation, and those are the moments worth examining without the usual condescension.

On the first day of Justice Brett Kavanaugh’s Supreme Court confirmation hearings in September 2018, the chamber descended almost immediately into the theater that had by then become customary. Protesters disrupted proceedings from the gallery. Democratic senators jockeyed for camera time. The atmosphere was more performance than inquiry. Into this circus, Sasse delivered a 12-minute statement that went viral because it said plainly what almost no one in that room was willing to say: The hysteria around confirmation hearings is a symptom, not the disease. Congress had spent decades delegating its legislative authority to executive agencies and now blamed the courts for filling the vacuum.

“It is predictable now that every confirmation hearing is going to be an overblown, politicized circus,” he said. “And it’s because we’ve accepted a bad new theory about how our three branches of government should work.” The corrective he offered was simple: Congress should pass laws and stand before voters. The executive should enforce those laws. Judges should apply them, not write them. Naturally, no one disagreed out loud.

He delivered a version of the same argument at Justice Amy Coney Barrett’s hearing in 2020. Neither speech moved the institution. Both captured something true and important about why the institution was failing, and both were widely shared by people who had largely stopped expecting a sitting senator to say anything worth sharing. The Kavanaugh statement was described in this publication at the time as the civics lesson Washington desperately needed. That it needed to be given by a freshman senator to the full Senate Judiciary Committee was Sasse’s real point.

He also understood, more clearly than most of his colleagues, that the Senate’s dysfunction was not incidental but structural. The cameras, he argued, were a bad incentive. The constant travel and time spent fundraising corroded the relationships that make effective governing possible. Most tellingly, he believed that senators had come to treat their office as the purpose of their lives rather than a temporary form of service to something larger. When Pelley noted on 60 Minutes that many senators he knew “would not be able to breathe without that job,” Sasse replied that he feared that was true and that it represented “a much, much deeper problem.” The best title a person could hold, he said, was dad, mom, neighbor, friend. Senator was “a great way to serve. It should be your 11th calling or maybe sixth, but never top.”

When he resigned from the Senate in January 2023 with four years remaining in his term to become president of the University of Florida, many observers treated it as confirmation of the pundit critique: He could not stay the course. The more honest reading is that he had concluded the institution was, as he told Pelley, “very, very unproductive” and that there were better things for him to do. “We didn’t do real things,” he said. “And it felt like the opportunity cost was really high.” He moved to Florida, then stepped down from that post roughly a year and a half later when his wife, Melissa, was diagnosed with epilepsy and required full-time care. The man who had argued that being a senator should rank no higher than sixth on a person’s list of priorities was living accordingly.

Then, on Dec. 23, 2025, he posted the news to X. “Last week I was diagnosed with metastasized, stage-four pancreatic cancer, and am gonna die.” He was 53. Doctors at MD Anderson Cancer Center had cataloged the full spread: lymphoma, vascular cancer, lung cancer, liver cancer, and pancreatic cancer, the point of origin. He had been given three to four months to live. He called it what it was: “Advanced pancreatic is nasty stuff; it’s a death sentence.”

What followed was unexpected, at least to anyone who had expected Sasse to retreat from public life. He launched a podcast called Not Dead Yet. He sat down for a conversation with New York Times columnist Ross Douthat on the latter’s Interesting Times podcast in April, which was released just days after the interview aired and subsequently circulated widely. He appeared on 60 Minutes with Pelley on April 26, his face visibly marked by his medication, a drug called daraxonrasib from Revolution Medicines that had shrunk his tumors by 76% and extended his life by months that were not supposed to exist. He credited the extra time to “providence, prayer, and a miracle drug.”

The Douthat interview was the more intimate of the two conversations and the more remarkable. Douthat asked Sasse at the close whether he felt ready to die. Sasse said he did not feel ready but that he had hope, grounded in his Reformed Christian faith, that he would be with God. The response moved Douthat visibly to tears, something Sasse responded to with his characteristic dry humor. Earlier in the conversation, Sasse reflected on what the disease had given him alongside what it had taken. “I hate pancreatic cancer,” he told Douthat. “I would never wish it on anyone, but I would never want to go back to a time in my life where I didn’t know the prayer of pancreatic cancer. I can’t keep the planets in orbit. I can’t even grow skin on my face.”

The “prayer of pancreatic cancer,” as Sasse uses the phrase, is something like the acknowledgment of dependence that most people spend their healthiest years avoiding. He is not unusual among the terminally ill in arriving at that acknowledgment. He is unusual in the way he has extended it outward, into public argument, into the same institutional critique he was making in November 2015. On 60 Minutes, he was asked what Congress was missing, and he named the artificial intelligence revolution, the future of work, and the complete absence of 2030 or 2050 thinking in either party. Then, without prompting, he returned to the frame he had always used. “The Senate needs to be less like Instagram. The Senate needs to be more deliberative, and that means less smack-down nonsense,” he told Pelley, adding, “The Senate should be plodding, and steady, and boring, and trustworthy.”

by Jay Caruso, Washington Examiner | Read more:

Image: uncredited via

[ed. I knew very little about Ben Sasse before reading an article about daraxonrasib, the new breakthrough drug given to him in his treatment for aggressive pancreatic cancer. It goes without saying that Congress would be an entirely different place if there were more people like him. See also: Pancreatic cancer just met its match (Works in Progress):]

***

"For most of the last half-century, a diagnosis of metastatic pancreatic cancer was a death sentence. In December 2025, former Nebraska Senator Ben Sasse announced he had been diagnosed with stage four pancreatic cancer that had spread to his lungs, liver and other organs, and was given three to four months to live from the time of diagnosis. With little to lose, he enrolled in a clinical trial for an experimental drug. Four months later, he reported a 76 percent reduction in tumor volume, describing the drug, daraxonrasib, as a ‘miracle’. His face, ravaged by a severe skin rash from the treatment, told a more complicated story. Yet he was alive and grateful to be able to talk to his family.

A few days after Sasse’s interview, in April 2026, Revolution Medicines announced Phase 3 trial results for daraxonrasib showing the drug had roughly doubled survival in patients with metastatic pancreatic cancer compared to standard chemotherapy. For a disease where median survival has long been measured in months and where little had changed for decades, that result represents a genuine turning point.

But the significance extends beyond pancreatic cancer. Daraxonrasib is among the first drugs in an emerging generation designed to target RAS, a protein implicated in roughly a quarter of all human cancers and long considered beyond reach, in all its mutant forms. And it belongs to a broader class of medicines, molecular glues, that are beginning to show what becomes possible when drugs no longer depend on finding a ready-made pocket in their target. Several compounds in this class are now in clinical development, each probing a different protein that previous generations of drugs could not touch."