I get to visit about two dozen campuses every year, and I meet at least a few teachers like Montás at each of them. I can generally spot the ones with the pure disease, the ones with that raw teacher-fire. Usually, they had some experience early in life when they fell in love with learning. This love then became a ruling passion, and now they fervently seek to share it with their students in the classroom. You can find them at Ivies and at community colleges, at big state schools and small liberal-arts colleges. They are a part of what’s going right in American higher education, the part that critics (like me) don’t write about enough.

These teachers talk of their vocation in lofty terms. They are not there merely to download information into students’ brains, or to steer them toward that job at McKinsey. True humanistic study, they believe, has the power to change lives. They want to walk with students through the biggest questions:

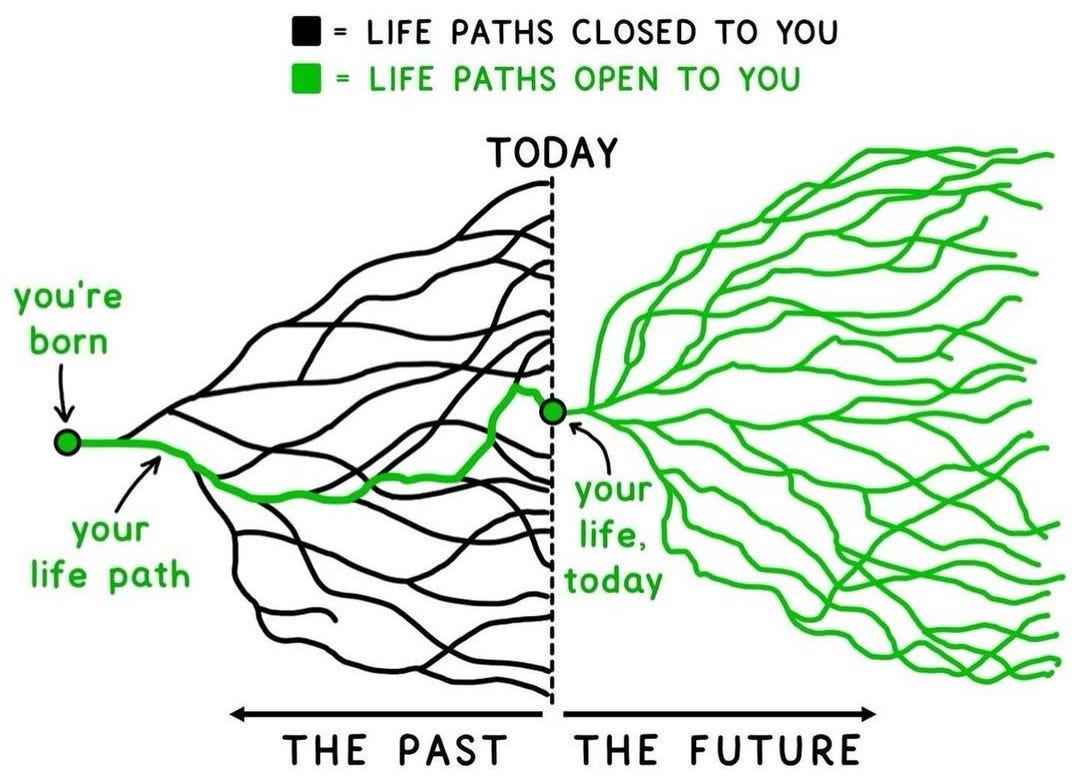

Who am I? What might I become? What is this world I find myself in? If you don’t ask yourself these questions, these teachers say, you risk wasting your life on trivial pursuits, following the conventional path, doing what others want you to do instead of what is truly in your nature. If society doesn’t offer this kind of deep humanistic education, where people learn to seek truth and cultivate a capacity for citizenship, then democracy begins to crumble. “What I’m giving the students is tools for a life of freedom,” Montás says.

These great teachers are the latest inheritors of the humanist tradition. Humanism is a worldview based on an accurate conception of human nature—that we are both deeply broken and wonderfully made. At our worst, humans are capable of cruelty, fascism, and barbarism that no other mammal can match. On the other hand, deep inside of us we possess fundamental longings for beauty, justice, love, and truth, which, when cultivated, can produce spiritual values and human accomplishments breathtaking in their scope.

Life is essentially a battle between our noblest aspirations and our natural egotism. Humanistic education prepares people for this struggle. Yes, schooling also has a practical purpose—to help students make a living and contribute to the economy. But that practical training works best when it is enmeshed within the larger process of forming a fully functioning grown-up—a person armed with knowledge, strength of judgment, force of character, and a thorough familiarity with the spiritual heritage of our civilization. Preprofessional education treats people solely as economic animals; humanistic education also treats them as social and moral animals.

Humanistic teachers do this by ushering students into the Great Conversation—the debate, stretching back centuries, that constitutes the best of what wise people have thought and expressed. These teachers help students encounter real human beings facing the vital challenges of life: Socrates confronting death, Sun Tzu on how to manage conflict, Dante in love, Zadie Smith on living in the boundary between different identities. The Great Conversation represents each generation’s attempt to navigate the dialectics of life, the tension between autonomy and belonging, freedom and order, intimacy and solitude, diversity and cohesion, achievement and equality. The Great Conversation never ends, because there are no final answers to these tensions, just a temporary balance that works for a particular person or culture in a particular context.

By introducing students to rival traditions of thought—Stoicism, Catholic social teaching, conservatism, critical race theory—colleges help students cultivate the beliefs, worldviews, and philosophies that will help them answer the elemental question of adulthood: What should I do next? By introducing them to history and literature, colleges arm students with wisdom about how humans operate, which is handy knowledge to have. They offer them not only life options but also, more importantly, the ability to choose among them. “Any serious human problem is a hard problem,” Andrew Delbanco, who teaches at Columbia, told me. “The fundamental obligation of a humanities teacher is to try to develop in students an allergy to ideology and certainty. To acknowledge self-doubt.”

But humanistic education is no mere intellectual enterprise. Its primary purpose is not to produce learned people but good people. When teachers do their job, they arouse in their students not only a passion for learning but also a passion to lead a life of generosity and purpose. “The correct analogy for the mind is not a vessel that needs filling, but wood that needs igniting—no more—and then it motivates one towards originality and instills the desire for truth,” Plutarch observed many centuries ago.

Teachers do this by making excellence attractive to the young—excellent lives, excellent ideas, excellent works of art, commerce, and science, and, above all, excellent ideals. The students who are captivated by these ideals find some cause to advance, some social problem to address, some business to start. When confronted by inspiring ideals, many students say: I care intensely about this, I want to orient my life around this. It’s not only their minds that have been refined but also their desires and ambitions. In a true humanistic education, the French philosopher Jacques Maritain wrote, “the shaping of the will is thoroughly more important to man than the shaping of the intellect.”

Preprofessional education is individualistic and selfish. Such students learn to ask: How can I outcompete my peers and beat them up the ladder to success? In a humanistic program, by contrast, groups of people gather to form communities of truth, to reason together, to explore life together, to pool their desires and seek the common good.

I find that students flock to humanistic teachers who radiate a sense of urgency. They tell students: We are doing something important here. College is not just frat parties and internships; it’s potentially the most important four years of your life. You can emerge either an anesthetized drone or a person fully curious, fully committed, and fully alive.

I know this kind of education can have this effect because it is the education I got decades ago at the University of Chicago. I knew I could never be as learned as the professors I encountered, but their passion for large topics and great books seemed so impressive to me. I yearned with all my soul to understand the world as best I could, to embark on a lifelong journey of growth. Whatever my ample failings, that yearning, kindled in those classrooms with those books and those teachers, has never gone away. I stumbled unknowingly into a humanistic education, because it was the only college I got into, but I can tell you, it totally worked on me.

Today, the teachers I’m talking about tend to feel like dissidents within the academy, like they are doing something countercultural. That’s because at most schools, humanistic education has been pushed into the remote corners of academic life. It’s not that people woke up one morning and decided to renounce the humanistic ideal. It’s just that other goals popped up. It was easier to fundraise for them, easier to sell them to tuition-paying parents. The idea of forming students into the best version of themselves sort of got left behind. [...]

Mark Edmundson also grew up in a working-class family, in Medford, Massachusetts. He got into college, something no one else in his family had done, and told his father that he might study prelaw, because you could make a decent living as a lawyer. His father, who had barely graduated high school, “detonated,” Edmundson later recalled. You only go to college once, his father roared, you better study what genuinely interests you. The rich kids get to study what they want, and you are just as good as any rich kids.

Edmundson soon encountered Sigmund Freud and Ralph Waldo Emerson. “They gave words to thoughts and feelings that I had never been able to render myself,” he wrote in his book, Why Teach? “They shone a light onto the world, and what they saw, suddenly I saw, too.” Edmundson now teaches poetry and literature at the University of Virginia.

“To get an education, you’re probably going to have to fight against the institution you find yourself in—no matter how prestigious it might be,” Edmundson once told an audience of students. “In fact, the more prestigious the school, the more you’ll probably have to push.”

The forces arrayed against humanistic learning are many:

Image: The Atlantic: Source: Laurie Michaels/Bridgeman Images

[ed. Contrast this with someone (below), who believes that colleges should be modeled after OnlyFans, and that hyper-specialization ("edge" degrees where AI will supposedly be less adept) is the future. I know which curriculum I'd choose.]