Sunday, April 6, 2014

The New Normal

[ed. Remember folks, you heard it here first.]

2. A sociocultural concept, c. 2013, having nothing to do with fashion, that concerns hipster types learning to get over themselves, sometimes even enough to enjoy mainstream pleasures like football along with the rest of the crowd.

3. An Internet meme that turned into a massive in-joke that the news media keeps falling for. (See below).

A little more than a month ago, the word “normcore” spread like a brush fire across the fashionable corners of the Internet, giving name to a supposed style trend where dressing like a tourist — non-ironic sweatshirts, white sneakers and Jerry Seinfeld-like dad jeans — is the ultimate fashion statement.

As widely interpreted, normcore was mall chic for people — mostly the downtown/Brooklyn creative crowd — who would not be caught dead in a shopping mall. Forget Martin Van Buren mutton chops; the way to stand out on the streets of Bushwick in 2014, apparently, is in a pair of Gap cargo shorts, a Coors Light T-shirt and a Nike golf hat. (...)

A style revolution? A giant in-joke? At this point, it hardly seems to matter. After a month-plus blizzard of commentary, normcore may be a hypothetical movement that turns into a real movement through the power of sheer momentum.

Even so, the fundamental question — is normcore real? — remains a matter of debate, even among the people who foisted the term upon the world. (...)

Like a mass sociological experiment, the question now is whether repetition, at a certain point, makes reality. Even those who coined the phrase concede that normcore has taken on a life of its own. “If you look through #normcore on Twitter or Instagram, people are definitely posting pictures of that look,” said Gregory Fong, a K-Hole founder. “Whether they believe it’s real or a joke, it’s impossible to say, but it’s there and it’s happening.”

It certainly seems to be. Sort of.

by Alex Williams, NY Times | Read more:

Image: uncredited

“It is an interesting subject: superfluous people in the service of brute power. A developed, stable, organized society is a community of clearly delineated and defined roles, something that cannot be said of the majority of third-world cities. Their neighborhoods are populated in large part by an unformed, fluid element, lacking precise classification, without position, place, or purpose. At any moment and for whatever reason, these people, to whom no one pays attention, whom no one needs, can form into a crowd, a throng, a mob, which has an opinion about everything, has time for everything, and would like to participate in something, mean something.

“All dictatorships take advantage of this idle magma. They don’t even need to maintain an expensive army of full-time policemen. It suffices to reach out to these people searching for some significance in life. Give them the sense that they can be of use, that someone is counting on them for something, that they have been noticed, that they have a purpose.”

Ryszard Kapuściński, from “Problem, No Problem.”

Photography: Judy Dater

via:

A Small Gain in Yardage

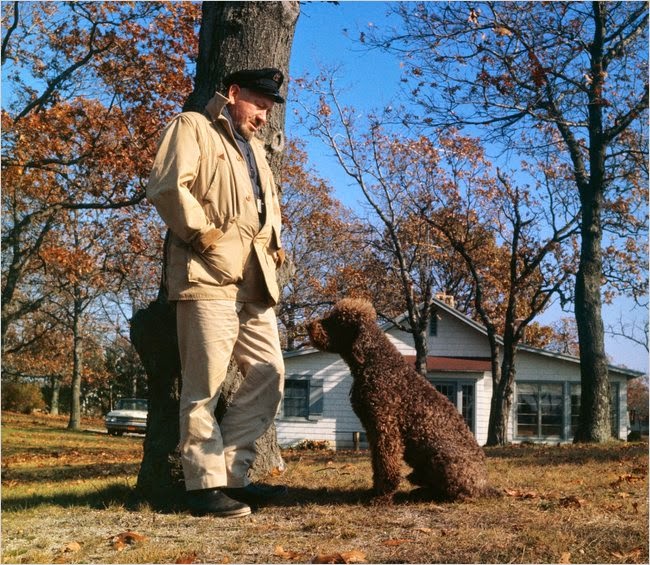

Who doesn't like to be a center for concern? A kind of second childhood falls on so many men. They trade their violence for the promise of a small increase of life span. In effect, the head of the house becomes the youngest child. And I have searched myself for this possibility with a kind of horror. For I have always lived violently, drunk hugely, eaten too much or not at all, slept around the clock or missed two nights of sleeping, worked too hard and too long in glory, or slobbed for a time in utter laziness. I've lifted, pulled, chopped, climbed, made love with joy and taken my hangovers as a consequence, not as a punishment. I did not want to surrender fierceness for a small gain in yardage... And in my own life, I am not willing to trade quality for quantity.

~ John Steinbeck, Travels With Charley

image: Bettmann/Corbis via:

The Perfect Lobby: How One Industry Captured Washington, DC

Many of America’s for-profit colleges have proven themselves a bad deal for the students lured by their enticing promises—as well as for US taxpayers, who subsidize these institutions with tens of billions annually in federal student aid.

More than half of the students who enroll in for-profit colleges—many of them veterans, single mothers, and other low- and middle-income people aiming for jobs like medical technician, diesel mechanic or software coder—drop out within about four months. Many of these colleges have been caught using deceptive advertising and misleading prospective students about program costs and job placement rates. Although the for-profits promise that their programs are affordable, the real cost can be nearly double that of Harvard or Stanford. But the quality of the programs is often weak, so even students who manage to graduate often struggle to find jobs beyond the Office Depot shifts they previously held. The US Department of Education recently reported that 72 percent of the for-profit college programs it analyzed produced graduates who, on average, earned less than high school dropouts.

Today, 13 percent of all college students attend for-profit colleges, on campuses and online—but these institutions account for 47 percent of student loan defaults. For-profit schools are driving a national student debt crisis that has reached $1.2 trillion in borrowing. They absorb a quarter of all federal student aid—more than $30 billion annually—diverting sums from better, more affordable programs at nonprofit and public colleges. Many for-profit college companies, including most of the biggest ones, get almost 90 percent of their revenue from taxpayers.

So why does Washington keep the money flowing?

It’s not that politicians are unaware of the problem. One person who clearly understands the human and financial costs of the for-profit college industry is President Obama. Speaking at Fort Stewart, Georgia, in April 2012, the president told the soldiers that some schools are "trying to swindle and hoodwink" them, because they only “care about the cash." Speaking off the cuff last year, Obama warned that some for-profit colleges were failing to provide the certification that students thought they would get. In the end, he said, the students "can't find a job. They default.... Their credit is ruined, and the for-profit institution is making out like a bandit." And, he noted, when students default on their federally backed loans, “the taxpayer ends up holding the bag.”

On March 14, the administration released its much-anticipated draft "gainful employment" rule, aimed at ending taxpayer support for career college programs that consistently leave students with insurmountable debt.

This rule would have a real impact: it would eventually cut off federal student grants and loans to the very worst career education programs, whose students consistently earn far too little to pay down their college loans, or whose students have very high rates of loan defaults.

But advocates believe that the standards in the proposed rule are too weak; they would leave standing many programs that harm a high percentage of their students. The rule, they argue, doesn’t do enough to ensure that federal aid goes only to programs that actually help students prepare for careers. But it isn’t soft enough to satisfy APSCU, the trade association representing for-profit colleges, which denounced the rule as the flawed product of a “sham” process. The administration gave the public until May 27 to comment before issuing a final rule.

APSCU and other lobbyists for the for-profit college industry are now out in full force, hoping to extract from the gainful employment rule its remaining teeth. Supporters of stronger standards to protect students from industry predation—among them the NAACP, the Consumers Union, Iraq and Afghanistan Veterans of America, the Service Employees International Union and others (including, full disclosure, myself)—will push back, but they have far fewer financial resources for the battle. This is a crucial round in a long fight, one in which the industry has already displayed a willingness to spend tens of millions to manipulate the machinery of modern influence-peddling—and with a remarkable degree of success.

Because most of this lobbying money is financed by taxpayers, this is a story of how Washington itself created a monster—one so big that it can work its will on the political system even when the facts cry out for reform and accountability.

by David Halperin, The Nation | Read more:

Image: Brian Ach / AP Images for DeVry University

Debunking Alcoholics Anonymous: Behind the Myths of Recovery

Myths have a way of coming to resemble facts through repetition alone. This is as true in science and psychology as in politics and history. Today few areas of public health are more riven with unsubstantiated claims than the field of addiction.

Alcoholics Anonymous has been instrumental in the widespread adoption of many such myths. The organization’s Twelve Steps, its expressions, and unique lexicon have found their way into the public discourse in a way that few other “brands” could ever match. So ingrained are these ideas, in fact, that many Americans would be hard-pressed to identify which came from AA and which from scientific investigation.

The unfortunate part of this cultural penetration is that many addiction myths are harmful or even destructive, perpetuating false ideas about who addicts are, what addiction is, and what is needed to quit for good. In this chapter, I’d like to take a look at a few of these myths and examine some of the ways they impair efforts at adopting a more effective approach.

MYTH #1: YOU HAVE TO “HIT BOTTOM” BEFORE YOU CAN GET WELL

This common myth essentially says that an addict needs to reach a point of absolute loss or despair before he or she can begin to climb back toward a safe and productive life.

The most common objection to this myth is simple logic: nobody can possibly know where their “bottom” is until they identify it in retrospect. One person’s lowest point could be a night on the street, while another’s could be a bad day at work or even a small personal humiliation. It’s not unusual for one “bottom” to make way for another following a relapse. Without a clear definition, this is a concept that could be useful only in hindsight, if it is useful at all.

A bigger problem with this notion is the idea that addiction is in some fundamental way just a matter of stubbornness or stupidity—that is, addicts cannot recover until they are shown the consequences of their actions in a forceful enough way. This is a dressed-up version of the idea that addiction is a conscious choice and that stopping is a matter of recognizing the damage it causes. I have said it before, but it bears repeating: if consequences alone were enough to make someone stop repeating an addictive behavior, there would be no addicts. One of the defining agonies of addiction is that people can’t stop despite being well aware of the devastating consequences. That millions of people who have lost their jobs, marriages, and families are still unable to quit should be a clear indication that loss and despair, even in overwhelming quantities, aren’t enough to cure addiction. Conversely, many addicts stop their behavior at a point where they have not hit bottom in any sense.

There is a moralistic subtext at work here as well. The notion that addicts have to hit bottom suggests that they are too selfish to quit until they have paid a steep enough personal price. Once again we get an echo of the medieval notion of penance here: through suffering comes purity. Addicts no more need to experience devastating personal loss than does anyone else with a problem. Yes, it can be useful when a single moment helps to crystallize that one has a problem, but the fantasy that this moment must be especially painful is simply nonsensical.

Finally, the dogmatic insistence that addicts hit bottom is often used to excuse poor treatment. Treaters who are unable to help often scold addicts by telling them that they just aren’t ready yet and that they should come back once they’ve hit bottom and become ready to do the work. This is little more than a convenient dodge for ineffectual care, and a needless burden to place on the shoulders of addicts.

by Dr. Lance Dodes and Zachary Dodes, Salon | Read more:

Image: DonNichols via iStock/Salon

Saturday, April 5, 2014

Just Cheer, Baby

Lacy T. was born to cheer. When she dances, she moves at the speed of a shook-up pompom. When she talks, it's in a peppy Southern drawl that makes everything sound as sweet as sugar. And when she poses, she is the image of a classic pinup: big hair, tiny waist and full lips that part to reveal a megawatt smile.

Naturally, when Lacy auditioned for the Oakland Raiderettes a year ago, she made the squad. And the Raiderettes quickly set to work remaking her in their image. She would be known exclusively by her first name and last initial -- a tradition across the NFL, ostensibly designed to protect its sideline stars from prying fans. The squad director handed Lacy, now 28, a sparkling pirate-inspired crop top, a copy of the team's top-secret "bible" -- which guides Raiderettes in everything from folding a dinner napkin correctly to spurning the advances of a married Raiders player -- and specific instructions for maintaining a head-to-toe Raiderettes look. The team presented Lacy with a photograph of herself next to a shot of actress Rachel McAdams, who would serve as Lacy's "celebrity hairstyle look-alike." Lacy was mandated to expertly mimic McAdams' light reddish-brown shade and 1 1/2-inch-diameter curls, starting with a $150 dye job at a squad-approved salon. Her fingers and toes were to be french-manicured at all times. Her skin was to maintain an artificial sun-kissed hue into the winter months. Her thighs would always be covered in dancing tights, and false lashes would be perpetually glued to her eyelids. Periodically, she'd have to step on a scale to prove that her weight had not inched more than 4 pounds above her 103-pound baseline.

Long before Lacy's boots ever hit the gridiron grass, "I was just hustling," she says. "Very early on, I was spending money like crazy." The salon visits, the makeup, the eyelashes, the tights were almost exclusively paid out of her own pocket. The finishing touch of the Raiderettes' onboarding process was a contract requiring Lacy to attend thrice-weekly practices, dozens of public appearances, photo shoots, fittings and nine-hour shifts at Raiders home games, all in return for a lump sum of $1,250 at the conclusion of the season. (A few days before she filed suit, the team increased her pay to $2,780.) All rights to Lacy's image were surrendered to the Raiders. With fines for everything from forgetting pompoms to gaining weight, the handbook warned that it was entirely possible to "find yourself with no salary at all at the end of the season."

Like hundreds of women who have cheered for the Raiders since 1961, Lacy signed the contract. Unlike the rest of them, she also showed it to a lawyer.

by Amanda Hess, ESPN | Read more:

Image: Chris McPherson

New York 2050: Connected, But Alone

There are so many of us now.

Two centuries ago, the urban bourgeoisie of Haussmann's Paris used the grands boulevards, the shopping arcades, as the stage on which to perform the identities they had chosen for themselves. We are no longer so limited.

People like us don't use streets. We can, we must, use space for houses. The broad sidewalks of Madison Avenue, the soft green of Central Park: all these, if we see them at all, are only shadows between skyscrapers: patches between the towers that have taken the place of crosswalks, of trees.

After all, we don't need them. Social media – now a quaintly antiquarian term, like “speakeasy” or “rave” – has been supplanted by an even bolder form of avatar-making. People like us needn't leave the house, after all. We send our proxy-selves by video or by holograph to do our bidding for us. Selves we can design, control. The Danish philosopher Soren Kierkegaard once analysed the anxiety of choice, of the dizziness engendered by an infinite number of possibilities. “Because it is possible to create,” he wrote, “creating one’s self, willing to be one’s self … one has anxiety. One would have no anxiety if there were no possibility whatever.” We know this is false. Of course we can create ourselves. We can look, act, enchant others, any way we like. We make ourselves in our own images.

At least, people like us do.

People like us have no limits. With so many avatars, we have no fear of missing out. We can be everywhere, every time. We simultaneously attend cocktail parties, dinners, fashionable literary readings, while going on five or ten dates with five or ten different men, who may or may not be on dates with as many women. All it takes is a click, and we've grown adept at multi-tasking. We sit within our white walls, in our windowless rooms, and project onto the barest of surfaces the most dizzying imagined backgrounds: Fifth Avenue as it once was, the Rainbow Room still intact, Coney Island before it sank into the sea. We project our friends and lovers, as they want us to see them, and know that somewhere, on their ceilings, we exist: the way we've always wanted to be.

The technology was invented in Silicon Valley, but it was in New York that it first caught on. In New York, where we were most wild for self-invention, where we were most afraid of missing out, where we were so afraid of being only one, lost in a crowd.

We fill up our hard drives with as many avatars, as many images, as we can afford. Demand has driven up the price of computer memory, and now this is where the majority of our money goes. The richest can afford as many selves as they like – any and all genders, appearances, orientations; our celebrities attend as many as a hundred parties at once. Though most of us still have to choose. We might have five or six avatars, if we're comfortably middle-class.

Still, we worry we've chosen wrong.

by Tara Isabella Burton, BBC | Read more:

Image: Science Photo Library

Free of One’s Melancholy Self

When Jordan Belfort—played by Leonardo DiCaprio in a truly masterful moment of full-body acting—wrenches himself from the steps of a country club into a white Lamborghini that he drives to his mansion, moviegoers, having already watched some two hours of Martin Scorsese’s The Wolf of Wall Street, are meant to be horrified. His addiction to quaaludes (and money, and cocaine, and sex, and giving motivational speeches) has rendered him not just a metaphorical monster but a literal one. He lunges at his pregnant wife and his best friend, played by Jonah Hill, and equally high; he smashes everything in his path, both with his body and with the aforementioned Lamborghini. He gurgles and drools and mangles even monosyllabic words. He’s Frankenstein in a polo shirt.

But what of the movie’s glossier scenes? The one where Belfort and his paramour engage in oral sex while speeding down a highway? Where he and his friends and colleagues are on boats and planes and at pool parties totally free of the inhibitions that keep most of us adhering to the laws of common decency? What about the parts that look fun?

Everyone I spoke to post-Wolf (at least, everyone who liked it) rapturously praised Terence Winter’s absurd dialogue, DiCaprio’s magnetism, Scorsese’s eye for beautiful grotesquerie. Most of them also included a half-whispered, wide-eyed aside: What exactly are quaaludes, and where can we get some?

Often prescribed to nervous housewives, a quaalude was something between a sleeping pill and a sedative. First synthesized in the late fifties, by 1965 ’ludes were being manufactured by William H. Rorer Inc., a Pennsylvania pharmaceutical company. The name “quaalude” is both a play on “Maalox,” another product manufactured by William H. Rorer Inc., and a synthesis of the phrase “quiet interlude”—a concept so simple and often so out of reach. Just whisper “quiet interlude” to yourself a few times. Seductive, no? It’s the pill in the “take a pill and lie down” directive thousands of Don Drapers gave their Bettys.

Of course, housewives have children who grow into curious teenagers, and medicine-cabinet explorations led the children of boomers to discover a new use for the drug. Most sedatives are designed to take you away within fifteen minutes, but—as Belfort explains in a lengthy paean to ’ludes—fighting the high leads one into a state almost universally described as euphoria. “It was hard to imagine how anything could feel better than this. Any problems you had were immediately forgotten or irrelevant,” said one person who came of age when ’ludes were still floating around. “Nothing felt like being on quaaludes except being on quaaludes.”

William James thought the world was made up of two halves: the healthy-minded, or those who could “avert one’s attention from evil, and live simply in the light of good … quite free of one’s melancholy self,” and the sick-souled, or morbid-minded, “grubbing in rat-holes instead of living in the light; with their manufacture of fears, and preoccupation with every unwholesome kind of misery, there is something almost obscene about these children of wrath.” In the end, to be of morbid mind is, according to James, the better option—the harsh realities the healthy-minded cheerily repel “may after all be the best key to life’s significance, and possibly the only openers of our eyes to the deepest levels of truth.” Still, it’s not easy, being a sick soul. James is one of the first persons to pop up in a search of “neurasthenia,” the catch-all term for those who suffered from nervousness, exhaustion, and overthinking in the nineteenth century.

Maybe William James needed a quiet interlude. Maybe something like a quaalude, something that makes you feel like yourself without any of the stress of actually being yourself, can be, for a healthy mind looking to spice up a Saturday night, something that enhances dancing and drinking and sex and honesty. But for someone like Jordan Belfort—whose desires beget more desires until he isn’t sure whether they’re real or if he’s wanting just to want—quaaludes were probably more an occupational necessity than a recreational getaway.

by Angela Serratore, Paris Review | Read more:

Image: The Quaaludes featuring the DT’s album cover, 2011.

Friday, April 4, 2014

Invisible World, Invisible Saviors

Ecologists have exemplified this tension between the macro and the micro of biology. For more than 60 years, ecologists have been interested in understanding how the biodiversity within different ecosystems is determined. Throughout the twentieth century, the number of plant and animal species was viewed as the primary metric of biodiversity. Investigators identified a number of variables that influenced species richness, including climate, the heterogeneity of habitats within the ecosystem, and the abundance of solar radiation. Rain forests support lots of species because their climate is relatively uniform throughout the year, the trees and shrubs create an abundance of distinct habitats, and the sun shines year round. The stability of the ecosystem is another significant consideration. Some tropical forests are so old that evolution has had time to birth many of their younger species. (...)

By adding microbes to the public discourse we may get closer to comprehending the real workings of the biosphere and the growing threat to their perpetuation. Interest in, and indifference to, conserving different species show an extraordinary bias in favor of animals with juvenile facial features, “warm” coloration, “endearing” behavior (fur helps too), and other characteristics that appeal to our innate and cultural preferences. The level of discrimination is surprising. Lion cubs have almost universal appeal, and it must take a lifetime of horrors to numb someone to the charms of a baby orangutan. But we make subconscious rankings of animals of every stripe. Among penguins, for example, we prefer species with bright yellow or red feathers. The charismatic megafauna are very distracting, and the popularization of microbial beauty will require a shift in thinking, a subtlety of news coverage, a new genre of wildlife documentary. The ethical responsibility lies with the nations that are engaged in modern biology. (...)

Knowledge of the gut microbiome changes the balance a little. Our highly bacterial nature seems significant to me in an emotional sense. I’m captivated by the revelation that my breakfast feeds the 100 trillion bacteria and archaea in my colon, and that they feed me with short-chain fatty acids. I’m thrilled by the fact that I am farmed by my microbes as much as I cultivate them, that bacteria modulate my physical and mental well-being, and that my microbes are programmed to eat me from the inside out as soon as my heart stops delivering oxygenated blood to my gut. My bacteria will die too, but only following a very fatty last supper. It is tempting to say that the gut microbiome lives and dies with us, but this distinction between organisms is inadequate: our lives are inseparable from the get-go. The more we learn about the theater of our peristaltic cylinder, the more we lose the illusion of control. We carry the microbes around and feed them; they deliver the power that allows us to do so.

Viewed with some philosophical introspection, microbial biology should stimulate a feeling of uneasiness about the meaning of our species and the importance of the individual. But there is boundless opportunity to feel elevated by this science. There are worse fates than to be our kind of farmed animal.

by Nicolas P. Money, Salon | Read more:

Image: AP/Agriculture Department

We’re Creating a New Category of Being

One of the unexpected pleasures of modern parenthood is eavesdropping on your ten-year-old as she conducts existential conversations with an iPhone. “Who are you, Siri?” “What is the meaning of life?” Pride becomes bemusement, though, as the questions degenerate into abuse. “Siri, you’re stupid!” Siri’s unruffled response—“I’m sorry you feel that way”—provokes “Siri, you’re fired!”

I don’t think of my daughter as petulant. Friends tell me they’ve watched their children go through the same love, then hate, for digital personal assistants. Siri’s repertoire of bon mots is limited, and she can be slow to understand seemingly straightforward commands, such as, “Send e-mail to Hannah.” (“Uh oh, something’s gone wrong.”) Worse, from a child’s point of view, she rebuffs stabs at intimacy: Ask her if she loves you, and after deflecting the question a few times (“Awk-ward,” “Do I what?”) she admits: “I’m not capable of love.” Earlier this year, a mother wrote to Philip Galanes, the “Social Q’s” columnist for The New York Times, asking him what to do when her ten-year-old son called Siri a “stupid idiot.” Stop him, said Galanes; the vituperation of virtual pals amounts to a “dry run” for hurling insults at people. His answer struck me as clueless: Children yell at toys all the time, whether talking or dumb. It’s how they work through their aggression.

Siri will get smarter, though, and more companionable, because conversational agents are almost certain to become the user interface of the future. They’re already close to ubiquitous. Google has had its own digital personal assistant, Google Voice Search, since 2008. Siri will soon be available in Ford, Toyota, and General Motors cars. As this magazine goes to press, Microsoft is unveiling its own version of Siri, code-named Cortana (the brilliant, babelicious hologram in Microsoft’s Halo video game). Voice activation is the easiest method of controlling the smart devices—refrigerators, toilets, lights, elevators, robotic servants—that will soon populate our environment. All the more reason, then, to understand why children can’t stop trying to make friends with these voices. Think of our children as less inhibited avatars of ourselves. It is through them that we’ll learn what it will be like to live in a world crowded with “friends” like Siri.

The wonderment is that Siri has any emotional pull at all, given her many limitations. Some of her appeal can be chalked up to novelty. But she has another, more fundamental attraction: her voice. Voice is a more visceral medium than text. A child first comes to know his mother through her voice, which he recognizes as distinctively hers while still in the womb. Moreover, the disembodied voice unleashes fantasies and projections that the embodied voice somehow keeps in check. That’s why Freud sat psychoanalysts behind their patients. It’s also why phone sex can be so intense.

by Judith Shulevitz, New Republic | Read more:

Image: uncredited
