Here are two things to know about architects. First, they are fastidious and inventive with their names. Frank Lincoln Wright was never, unlike Sinatra, a Francis. He swapped in the Lloyd when he was 18—ostensibly in tribute to his mother’s surname on the occasion of her divorce, but also to avoid carrying around the name of a still more famous man, and for that nice three-beat meter, in full anticipation of seeing his full name in print. In 1917, Charles-Edouard Jeanneret-Gris—who is to modern architecture what Freud is to psychoanalysis—was given the byline Le Corbusier (after corbeau, crow) by his editor at a small journal, so that he could anonymously review his own buildings. The success of the sock puppet critic meant that after the critiques were collected into a book-length manifesto, the nom-de-plume eventually took over Jeanneret-Gris’ architect persona, as well. Ludwig Mies—the inventor of the glass-walled skyscraper—inherited an unfortunate surname that doubled as a German epithet for anything lousy or similarly defiled. He restyled himself Miës van der Rohe—vowel-bending heavy-metal umlaut and all—with the Dutch geographical tussenvoegsel “van” from his mother’s maiden name to add a simulation of the German nobiliary particle, von. Ephraim Owen Goldberg became Frank Gehry.

Second, all architects are older than you think. Or than they want you to think. Unlike the closely adjacent fields of music and mathematics, architecture has no prodigies. Design and construction take time. At 40, an architect is just starting out. Dying at 72 in architecture is like dying at 27 in rock and roll. The body of knowledge required is broad and intricate, philosophical and practical, and the training is long. The American Institute of Architects, a self-appointed enforcer of the title, requires five to seven years of school, then years more of closely monitored internships, and a further year of exams to confer the word architect on its dues-payers. This has formalized a longstanding tradition in which, architecture schools being rare (for a long time the Paris Académie des Beaux-Arts and the Massachusetts Institute of Technology were your choices), it wasn’t unusual for would-be architects to lose a decade in a kind of autodidactical twilight among artists and builders, before commencing their practice. Even now, architects start late. And they never stop, working well into their eighties and nineties, or, famously in the case of Brazilian midcentury master Oscar Niemeyer, their hundreds. This is why the life story of a very different midcentury master, the Philadelphian Louis Kahn, who got started only around age 50 and died at around 70, is, among other things, a tragedy. A student once asked Kahn why it took so long to become an architect. “Why not,” he answered, “you want to die earlier?”

These matters of name and age reflect the social uncertainty and financial precarity of the profession. Prominent architects, from Palladio to Mies, were sons of stonemasons who jumped up socially thanks to gentleman patrons. The class ambiguity persists to this day; the architecture studios I teach are full of people who are the first in their families to enter any of the professions. Or are the opposite: would-be bohemian artiste children of first-generation professionals who have compromised with their elders. These exchanges of capital and class, style and status, are complicated: ever since the upstart Medici family employed Giorgio Vasari to put up pageants and palaces to substitute for pedigree, the ornamental company of architects—though themselves only tradesmen and servants—has conferred a touch of the very class to which architects also aspire. The slow and resource-rich making of buildings is impossible without the patronage of invested clients. Architecture, like certain kinds of filmmaking, is an art of spending a lot of other people’s money: a successful architect, said the teacher of the single business class my design school obliged me to take, should be the poorest person in any room. Architects, relieved just to build, work for a tiny fractional fee of projects’ construction costs. And, pleased to imagine themselves worldly, they work without managers and agents. The hours are long. The pay is bad. When Kahn died, his firm—slow-rolling chaos held together by a long-suffering Quaker deputy named David Wisdom—owed its creditors $464,423.83. In 1974 dollars.

Wendy Lesser, in her monumental new biography of Kahn, You Say to Brick, chooses to call her subject, for the most part, Lou. As in Reed, Gehrig, and Costello—a name that connotes a kind of nostalgic American working-class heroism. Lesser recounts how Kahn disarmed a colleague, who was inclined to forever call him Professor, by saying, “In the office, everyone calls me Lou.” Wendy understandably follows that lead, but for me, her Lou, Lou, Lou rings like a cowbell: like a life of the prophet Isaiah that calls its subject Izzy. Within the small world of architecture, a self-regarding world that guards its heroes to a fault—a world where Kahn is almost alone in being almost universally revered (even by those who think of his work as a terminally perfected dead end)—the only people who say “Lou” are the dwindling ranks of his former students. But even they all seem to say Lou-Kahn, one word, like lupin or Lacan. To the rest of us he has become that second single syllable, whose long open vowel—sound of submission and satisfaction—echoes in its evocation of preeminence the exotic imperial honorific, Khan.

by Thomas De Monchaux, N+1

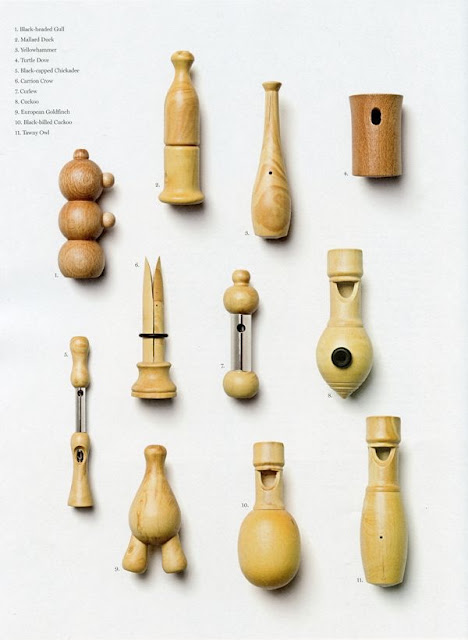

Image: Gunnar Klack