[ed. This is like my worst nightmare. There's also another version, narrated with Marshawn Lynch's epic Beast Quake run in 2011, which adds a little humor and helps dissipate the scariness a bit. Watch at your own (mental health) risk.]

Friday, December 9, 2016

Thursday, December 8, 2016

Who Would Destroy the World?

Consider a seemingly simple question: If the means were available, who exactly would destroy the world? There is surprisingly little discussion of this question within the nascent field of existential risk studies. But the issue of “agential risks” is critical: What sort of agent would either intentionally or accidentally cause an existential catastrophe?

An existential risk is any future event that would either permanently compromise our species’ potential for advancement or cause our extinction. Oxford philosopher Nick Bostrom coined the term in 2002, but the concept dates back to the end of World War II, when self-annihilation became a real possibility for the first time in human history.

In the past 15 years, the concept of an existential risk has received growing attention from scholars in a wide range of fields. And for good reason: An existential catastrophe could only happen once in our history. This raises the stakes immensely, and it means that reacting to existential risks won’t work. Humanity must anticipate such risks to avoid them.

So far, existential risk studies has focused mostly on the technologies—such as nuclear weapons and genetic engineering—that future agents could use to bring about a catastrophe. Scholars have said little about the types of agents who might actually deploy these technologies, either on purpose or by accident. This is a problematic gap in the literature, because agents matter just as much as, or perhaps even more than, potentially dangerous advanced technologies. They could be a bigger factor than the number of weapons of total destruction in the world.

Agents matter. To illustrate this point, consider the “two worlds” thought experiment: In world A, one finds many different kinds of weapons that are powerful enough to destroy the world, and virtually every citizen has access to them. Compare this with world B, in which there exists only a single weapon, and it is accessible to only one-fourth of the population. Which world would you rather live in? If you focus only on the technology, then world B is clearly safer.

Imagine, though, that world A is populated by peaceniks, while world B is populated by psychopaths. Now which world would you rather live in? Even though world A has more weapons, and greater access to them, world B is a riskier place to live. The moral is this: To accurately assess the overall probability of risk, as some scholars have attempted to do, it’s important to consider both sides of the agent-tool coupling.

Studying agents might seem somewhat trivial, especially for those with a background in science and technology. Humans haven’t changed much in the past 30,000 years, and we’re unlikely to evolve new traits in the coming decades, whereas the technologies available to us have changed dramatically. This makes studying the latter much more important. Nevertheless, studying the human side of the equation can suggest new ways to mitigate risk.

Agents of terror. “Terrorists,” “rogue states,” “psychopaths,” “malicious actors,” and so on—these are frequently lumped together by existential risk scholars without further elaboration. When one takes a closer look, though, one discovers important and sometimes surprising differences between various types of agents. For example, most terrorists would be unlikely to intentionally cause an existential catastrophe. Why? Because the goals of most terrorists—who are typically motivated by nationalist, separatist, anarchist, Marxist, or other political ideologies—are predicated on the continued existence of the human species.

The Irish Republican Army, for example, would obstruct its own goal of reclaiming Northern Ireland if it were to dismantle global society or annihilate humanity. Similarly, if the Islamic State were to use weapons of total destruction against its enemies, doing so would interfere with its vision for Muslim control of the Middle East.

The same could be said about most states. For example, North Korea’s leaders may harbor fantasies of world domination, and the regime could decide that launching nuclear missiles at the West would help achieve this goal. But insofar as North Korea is a rational actor, it is unlikely to initiate an all-out nuclear exchange, because this could produce a nuclear winter leading to global agricultural failures, which would negatively impact the regime’s ability to maintain control over large territories.

On the other hand, there are some types of agents that might only pose a danger after world-destroying technologies become widely available—but not otherwise. Consider the case of negative utilitarians. Individuals who subscribe to this view believe that the ultimate aim of moral conduct is to minimize the total suffering in the universe. As the Scottish philosopher R. N. Smart pointed out in a 1958 paper, the problem with this view is that it seems to call for the destruction of humanity. After all, if there are no humans around to suffer, there can be no human suffering. Negative utilitarianism—or at least some versions of it—suggests that the most ethical actor would be a “world-exploder.”

As powerful weapons become increasingly accessible to small groups and individuals, negative utilitarians could emerge as a threat to human survival. Other types of agents that could become major hazards in the future are apocalyptic terrorists (fanatics who believe that the world must be destroyed to be saved), future ecoterrorists (in particular, those who see human extinction as necessary to save the biosphere), idiosyncratic agents (individuals, such as school shooters, who simply want to kill as many people as possible before dying), and machine superintelligence.

Superintelligence has received considerable attention in the past few years, but it’s important for scholars and governments alike to recognize that there are human agents who could also bring about a catastrophe. Scholars should not succumb to the “hardware bias” that has so far led them to focus exclusively on superintelligent machines.

by Phil Torres, Bulletin of the Atomic Scientists | Read more:

Image: Dr. Strangelove

Labels: Critical Thought, Military, Politics, Security

Starbucks's $10 Cup of Coffee Is Priced Just Right

[ed. I've been in the Roastery in Seattle on Pike (although I didn't know it at the time). I'd just stopped in to get a Serious Pie pizza, which, as I found out, is situated in a small enclave adjacent to the main dining room. The whole operation looked like a brewery to me... massive roasting equipment, drying bins, conveyor belts running all over the place and other stuff going on while hundreds of people sat around drinking coffee, working on laptops, taking pictures and just soaking in the vibe (and tourists pouring in all the time). It was, generally, kind of weird. I kept asking myself "what is everyone doing here?" (I'm not much of a coffee drinker myself), but people seemed to be outright giddy about being in the happening place.]

It's not about the $10 cup of coffee.

Critics have derided Starbucks Corp.'s push into higher-end coffee bars, which the chain discussed in detail on Thursday, at an all-day confab with Wall Street investors and analysts. Skeptics questioned whether millennials would pony up for pricey drinks such as the $10 Nitro cold-brew coffee, which is infused with nitrogen gas. Others poked fun at descriptions of exotic beans small-batch roasted in Seattle.

They are missing the point.

Starbucks is not trying to change its current business model by going even more upmarket than it already is, nor is it trying to convince folks to spend more on its run-of-the-mill drip coffee just because it's served by hipsters in hats and leather-lined cloth aprons (the new uniform of the higher-end Roastery stores).

On the contrary, Starbucks has launched a completely separate, brand new restaurant chain -- one that rejects the old model of brick-and-mortar ubiquity, while also getting back to the chain's roots.

Back in the 1980s, CEO Howard Schultz fashioned Starbucks in the vision of a "third place" -- corporate jargon for somewhere for people to hang out when not at work or home. But over the years, many of the 25,000 stores worldwide have basically turned into fast-food stations, where people get their coffee fix and get out.

Store Count

Starbucks aims to get up to 37,000 stores by 2021, which would overtake McDonald's store footprint

Many of the stores don't look all that different from McDonald's higher-end McCafés. Starbucks has invested in drive-thrus, mobile ordering and payment, and virtual baristas to speed up the process and get more money flowing through the chain.

The problem is, this model depends greatly on foot traffic. And with consumers spending increasingly less time at the malls and shopping centers Starbucks locations were built to serve, the company knows it can no longer rely on the "pull" model of intercepting existing foot traffic. Instead, it will have to create more of a "push" model, giving consumers reasons to get off their couch and come in to get a coffee.

Traffic Jam

Shopper traffic at retail stores is in a steep, years-long decline

Enter Starbucks Reserve and Roastery stores. They are more wine bar than coffeehouse, replete with tastings, mixologists and educated baristas. They're a place to take a date, to sit at the bar and experience the tastes and smells, as you'd do at a wine bar or craft brewery.

Indeed, customers at the already opened Roastery outpost in Seattle spend an average of 40 minutes at the restaurant, according to the company. Compare that to the few minutes it takes to swing by a Starbucks on your morning commute and pick up the vanilla latte you pre-ordered on your cell phone. It's a totally different business model.

by Shelly Banjo, Bloomberg | Read more:

Image: via:

Okinawa Churaumi Aquarium

Wednesday, December 7, 2016

The True Story of America’s Sky-High Prescription Drug Prices

Let’s say you’re at the doctor. And the doctor hands you a prescription.

The prescription is for Humira, an injectable medication used to treat a lot of common conditions like arthritis and psoriasis. Humira is an especially popular medication right now. In 2015, patients all around the world spent $14 billion on Humira prescriptions — that’s roughly the size of Jamaica's entire economy.

Let’s say your doctor appointment is happening in the United Kingdom. There, your Humira prescription will cost, on average, $1,362. If you’re seeing a doctor in Switzerland, the drug runs around $822.

But if you’re seeing a doctor in the United States, your Humira prescription will, on average, run you $2,669.

How does this happen? Why does Humira cost so much more here than it does in other countries?

Humira is the exact same drug whether it’s sold in the United States, in Switzerland, or anywhere else. What’s different about Humira in the United States is the regulatory system we’ve set up around our pharmaceutical industry.

The United States is exceptional in that it does not regulate or negotiate the prices of new prescription drugs when they come onto market. Other countries will task a government agency to meet with pharmaceutical companies and haggle over an appropriate price. These agencies will typically make decisions about whether these new drugs represent any improvement over the old drugs — whether they’re even worth bringing onto the market in the first place. They’ll pore over reams of evidence about drugs’ risks and benefits.

The United States allows drugmakers to set their own prices for a given product, and it allows every drug that's proven to be safe to come onto the market. And the problems that causes are easy to see, from the high copays at the drugstore to the people who can’t afford lifesaving medications.

What’s harder to see is that if we did lower drug prices, we would be making a trade-off. Lowering drug profits would make pharmaceuticals a less desirable industry for investors. And less investment in drugs would mean less research toward new and innovative cures.

There’s this analogy that Craig Garthwaite, a health economist, gave me that helped make this clear. Think about a venture capitalist who is deciding whether to invest $10 million in a social media app or a cure for pancreatic cancer.

“As you decrease the potential profits I’m going to make from pancreatic cures, I’m going to shift more of my investment over to apps or just keep the money in the bank and earn the money I make there,” Garthwaite says.

Right now America’s high drug prices mean that investing in pharmaceuticals can generate a whole bunch of profits — and that drugs can be too expensive for Americans to afford.

Let’s say you’re a pharmaceutical executive and you’ve discovered a new drug. And you want to sell it in Australia. Or Canada. Or Britain.

You’re going to want to start setting up some meetings with agencies that make decisions about drug coverage and prices.

These regulatory bodies generally evaluate two things: whether the country wants to buy your drug and, if so, how much they’ll pay for it. These decisions are often related, as regulators evaluate whether your new drug is enough of an improvement on whatever is already on the market to warrant a higher price.

So let’s say you want to sell your drug in Australia. You’ll have to submit an application to the Pharmaceutical Benefits Advisory Committee, where you’ll attempt to prove that your drug is more effective than whatever else is on the market right now.

The committee will then make a recommendation to the country’s national health care system of whether to buy the drug — and, if the recommendation is to buy it, the committee will suggest what price the health plan ought to pay.

Australia’s Pharmaceutical Benefits Advisory Committee is not easy to impress: It has rejected about half of the anti-cancer drug applications it received in the past decade because their benefits didn’t seem worth the price.

But if you do succeed — and Australia deems your drug worthy to cover — then you’ll have to decide whether the committee has offered a high enough price. If so, congrats! You’ve entered the Australian drug market.

Other countries regulate the price of drugs because they see them as a public utility

Countries like Australia, Canada, and Britain don’t regulate the price of other things that consumers buy, like computers or clothing. But they and dozens of other countries have made the decision to regulate the price of drugs to ensure that medical treatment remains affordable for all citizens, regardless of their income. Medication is treated differently because it is a good that some consumers, quite literally, can’t live without.

This decision comes with policy trade-offs, no doubt. Countries like Australia will often refuse to cover drugs that they don’t think are worth the price. In order for regulatory agencies to have leverage in negotiating with drugmakers, they have to be able to say no to the drugs they don’t think are up to snuff. This means certain drugs that sell in the United States aren’t available in other countries — and there are often public outcries when these agencies refuse to approve a given drug.

At the same time, just because there are more drugs on the American market, that doesn’t mean all patients can access them. “To think that patients have full access to a wide range of products isn’t right,” says Aaron Kesselheim, an associate professor of medicine at Harvard Medical School. “If the drugs are so expensive that you can’t afford them, that’s functionally the same thing as not even having them on the market.”

It also doesn’t mean we’re necessarily getting better treatment. Other countries’ regulatory agencies usually reject drugs when they don’t think they provide enough benefit to justify the price that drugmakers want to charge. In the United States, those drugs come onto market — which means we get expensive drugs that offer little additional benefit but might be especially good at marketing.

This happened in 2012 with a drug called Zaltrap, which treats colorectal cancer. The drug cost about $11,000 per month — twice as much as its competitors — while, in the eyes of doctors, offering no additional benefit.

“In most industries something that offers no advantage over its competitors and yet sells for twice the price would never even get on the market,” Peter Bach, an oncologist at Memorial Sloan Kettering Cancer Center, wrote in a New York Times op-ed. “But that is not how things work for drugs. The Food and Drug Administration approves drugs if they are shown to be ‘safe and effective.’ It does not consider what the relative costs might be.”

by Sarah Kliff, Vox | Read more:

Image: uncredited

The prescription is for Humira, an injectable medication used to treat a lot of common conditions like arthritis and psoriasis. Humira is an especially popular medication right now. In 2015, patients all around the world spent $14 billion on Humira prescriptions — that’s roughly the size of Jamaica's entire economy.

Let’s say your doctor appointment is happening in the United Kingdom. There, your Humira prescription will cost, on average, $1,362. If you’re seeing a doctor in Switzerland, the drug runs around $822.

Let’s say your doctor appointment is happening in the United Kingdom. There, your Humira prescription will cost, on average, $1,362. If you’re seeing a doctor in Switzerland, the drug runs around $822.But if you’re seeing a doctor in the United States, your Humira prescription will, on average, run you $2,669.

How does this happen? Why does Humira cost so much more here than it does in other countries?

Humira is the exact same drug whether it’s sold in the United States, in Switzerland, or anywhere else. What’s different about Humira in the United States is the regulatory system we’ve set up around our pharmaceutical industry.

The United States is exceptional in that it does not regulate or negotiate the prices of new prescription drugs when they come onto market. Other countries will task a government agency to meet with pharmaceutical companies and haggle over an appropriate price. These agencies will typically make decisions about whether these new drugs represent any improvement over the old drugs — whether they’re even worth bringing onto the market in the first place. They’ll pore over reams of evidence about drugs’ risks and benefits.

The United States allows drugmakers to set their own prices for a given product — and allows every drug that's proven to be safe come onto market. And the problems that causes are easy to see, from the high copays at the drugstore to the people who can’t afford lifesaving medications.

What’s harder to see is that if we did lower drug prices, we would be making a trade-off. Lowering drug profits would make pharmaceuticals a less desirable industry for investors. And less investment in drugs would mean less research toward new and innovative cures.

There’s this analogy that Craig Garthwaite, a health economist, gave me that helped make this clear. Think about a venture capitalist who is deciding whether to invest $10 million in a social media app or a cure for pancreatic cancer.

“As you decrease the potential profits I’m going to make from pancreatic cures, I’m going to shift more of my investment over to apps or just keep the money in the bank and earn the money I make there,” Garthwaite says.

Right now America’s high drug prices mean that investing in pharmaceuticals can generate a whole bunch of profits — and that drugs can be too expensive for Americans to afford.

Let’s say you’re a pharmaceutical executive and you’ve discovered a new drug. And you want to sell it in Australia. Or Canada. Or Britain.

You’re going to want to start setting up some meetings with agencies that make decisions about drug coverage and prices.

These regulatory bodies generally evaluate two things: whether the country wants to buy your drug and, if so, how much they’ll pay for it. These decisions are often related, as regulators evaluate whether your new drug is enough of an improvement on whatever is already on the market to warrant a higher price.

So let’s say you want to sell your drug in Australia. You’ll have to submit an application to the Pharmaceutical Benefits Advisory Committee, where you’ll attempt to prove that your drug is more effective than whatever else is on the market right now.

The committee will then make a recommendation to the country’s national health care system of whether to buy the drug — and, if the recommendation is to buy it, the committee will suggest what price the health plan ought to pay.

Australia’s Pharmaceutical Benefits Advisory Committee is not easy to impress: It has rejected about half of the anti-cancer drug applications it received in the past decade because their benefits didn’t seem worth the price.

But if you do succeed — and Australia deems your drug worthy to cover — then you’ll have to decide whether the committee has offered a high enough price. If so, congrats! You’ve entered the Australian drug market.

Other countries regulate the price of drugs because they see them as a public utility

Countries like Australia, Canada, and Britain don’t regulate the price of other things that consumers buy, like computers or clothing. But they and dozens of other countries have made the decision to regulate the price of drugs to ensure that medical treatment remains affordable for all citizens, regardless of their income. Medication is treated differently because it is a good that some consumers, quite literally, can’t live without.

This decision comes with policy trade-offs, no doubt. Countries like Australia will often refuse to cover drugs that they don’t think are worth the price. In order for regulatory agencies to have leverage in negotiating with drugmakers, they have to be able to say no to the drugs they don’t think are up to snuff. This means certain drugs that sell in the United States aren’t available in other countries — and there are often public outcries when these agencies refuse to approve a given drug.

At the same time, just because there are more drugs on the American market, that doesn’t mean all patients can access them. “To think that patients have full access to a wide range of products isn’t right,” says Aaron Kesselheim, an associate professor of medicine at Harvard Medical School. “If the drugs are so expensive that you can’t afford them, that’s functionally the same thing as not even having them on the market.”

It also doesn’t mean we’re necessarily getting better treatment. Other countries’ regulatory agencies usually reject drugs when they don’t think they provide enough benefit to justify the price that drugmakers want to charge. In the United States, those drugs come onto market — which means we get expensive drugs that offer little additional benefit but might be especially good at marketing.

This happened in 2012 with a drug called Zaltrap, which treats colorectal cancer. The drug cost about $11,000 per month — twice as much as its competitors — while, in the eyes of doctors, offering no additional benefit.

“In most industries something that offers no advantage over its competitors and yet sells for twice the price would never even get on the market,” Peter Bach, an oncologist at Sloan-Kettering Memorial Hospital, wrote in a New York Times op-ed. “But that is not how things work for drugs. The Food and Drug Administration approves drugs if they are shown to be ‘safe and effective.’ It does not consider what the relative costs might be.”

by Sarah Kliff, Vox | Read more:

Image: uncredited

How the Internet Unleashed a Burst of Cartooning Creativity

In 2012 he is finally getting his way. As the newspaper industry continues its decline, the funnies pages have decoupled from print. Instead of working for huge syndicates, or for censored newspapers with touchy editors, cartoonists are now free to create whatever they want. Whether it is cutting satire about Chinese politics, or a simple joke about being a dog, everything can win an audience on the internet.

This burst of new life comes as cartoons seemed to be in terminal decline. Punch, once a fierce political satire magazine whose cartoons feature in almost every British history textbook, finally closed its doors in 2002. The edgier Viz magazine, which sold a million copies an issue in the early 1990s, now sells 65,000. In the United States, of the sprawling EC Comics stable, only Mad magazine remains, its circulation down from 2.1m in 1974 to 180,000. Meanwhile, the American newspaper industry, home of the cartoon strip, now makes less in advertising revenue than at any time since the 1950s. (...)

Triumph of the nerds

The decline of newspapers and the rise of the internet have broken that system. Newspapers no longer have the money to pay big bucks to cartoonists, and the web means anybody can get published. Cartoonists who want to make their name no longer send sketches to syndicates or approach newspapers: they simply set up websites and spread the word on Twitter and Facebook. Randall Munroe, the creator of “XKCD”, left a job at NASA to write his stick men strip, full of science and technology jokes (see above and below). Kate Beaton, a Canadian artist who draws “Hark, A Vagrant”, sketched her cartoons between shifts while working in a museum. Matthew Inman created his comic “The Oatmeal” by accident while trying to promote a dating website he built to escape his job as a computer coder.

The typical format for a web comic was established a decade or more ago, says Zach Weiner, the writer of “Saturday Morning Breakfast Cereal”, or “SMBC” (below). It has not changed much since. Most cartoonists update on a regular basis — daily, or every other day — and run in sequence. “I think that’s purely because that’s what the old newspapers used to do,” says Mr Weiner. But whereas many newspaper comics tried to appeal to as many people as possible, often with lame, fairly universal jokes, online cartoonists are free to be experimental, in both content and form.

Ryan North uses the same drawing every day for his “Dinosaur Comics” — the joke is in the dialogue, which he writes fresh every weekday, and the absurdity of dinosaurs discussing Shakespeare and dating. “SMBC” flicks between one-panel gags and extremely long, elaborate stories. Fred Gallagher, the writer of “Megatokyo”, has created an entire soap-opera-like world, drawn in beautiful Japanese manga-style, accessible only to those who follow the saga regularly. Mr Munroe’s “XKCD” is usually a simple strip comic, but recently featured one explorable comic, entitled “Click and Drag”, which, if printed at high resolution, would be 46 feet wide.

Perhaps thanks to the technical skills needed to succeed, web cartoonists tend to be young — few are over 30 — well-educated and extremely geeky.

by The Economist, Medium | Read more:

Image: XKCD

Plum Crazy

I arrive at the SeaShell Motel in Naples around midnight. After an unexpected credit-shaming at the Budget rental car counter in the Fort Lauderdale airport, I’ve hauled ass through the Big Cypress Swamp in a downpour, enduring a static-ridden NPR station and the onset of McDonald’s farts, to find my late check-in instructions aggressively taped to the office door, as if by somebody familiar with Saran-wrapping frat boys to pine trees. I push open the door to my room, recalling one Travelocity commenter’s description of the place: scary at first. But it’s not scary at all; the room is spacious and clean. It’s just that a security light shines in the window like the angel of death all night, making it impossible to sleep without suffocating your face with pillows that another Travelocity commenter accurately described as flat.

In the morning, the receptionist asks, “Did you get your envelope okay? I was so scared it would fall off.” Rather, she yells this to me over an Eastern European couple who are fighting about a botched room reservation, a situation that turns out to be of the husband’s own doing, much like his unbuttoned floral shirt and plaid swim trunks combo. They may be the type of people who go on a beach vacation but never leave the motel pool. Not like myself—I’ve come on a beach vacation to hang out with plant nerds at the International Plumeria Conference.

Plumeria, also known as frangipani, is a tropical flowering tree most people associate with Hawaiian leis. The fragrant flowers usually have five petals, and, in the wild, most species of plumeria have white blooms with a yellow center. In nurseries and backyards, though, flowers of the species Plumeria rubra vary in color, size, and scent, with growers giving them fanciful names such as Fruit Salad and Vera Cruz Rose. A catalog of blooms—the industry leader is Jungle Jack’s out of San Diego County—might sound like a strip club roster if heard out of context: Essence, Temptation, Fantasia, Xquisite, Mystique. The plants are native to Mexico, South America, and the Caribbean, and weren’t brought to Hawaii until the 1860s, about two hundred years after they were first classified by the French botanist Charles Plumier, the original plumeria addict.

The Plumeria Society of America was founded in Houston in 1979 by three women who aimed to spread interest in the plant, then familiar only to those who’d vacationed in Hawaii. One of the women was a famous singer named Nancy Ames, but it was another, Elizabeth Thornton, the Queen of Plumeria, who was known for her breathtaking hybrids like Texas Aggie and Thornton’s Lemon Drop.

Plumeria rubra alone now consists of close to four thousand cultivars (when PSA registration began in 1989 there were just fifty-one). Celadine is commonplace in many cemeteries, hence its nickname: Graveyard Yellow. There is no such thing as a blue plumeria, or a green or a black, though people keep buying color frauds on Amazon and eBay. Depending on whom you ask, there are now legit purples: the Metallica, the Purple Jack. There are reds that turn almost black in intense, scorching heat: Black Widow, Black Tiger. There is a bloom called Plum Crazy, a deep purple and red with upturned edges and slithery, eel-like veins, devastatingly beautiful. In the mid-aughts famed grower Jim Little released a vibrant orange-gold plumeria in honor of Don Ho. It is said that Thornton, a University of Texas grad, spent her lifetime hoping to cultivate a burnt-orange bloom from seed, but she never did.

While researching her best seller The Orchid Thief, Susan Orlean came upon plumerias in South Florida but didn’t know them by name: “Along the path there were enormous tropical trees with pimply bark and flowers the color of bubble gum, the kind of trees you would draw in a tropical cartoon.” Trees for perpetual adolescence. Trees for people like me.

The International Plumeria Conference takes place every ten years. The last time it was held—the inaugural convention, in Galveston—I was twenty-five and my experience with houseplants ran toward half-dead crotons and dank nightstand weed. My dad got me into plumeria. He’s an old surfer with a dozen trees in Satellite Beach, Florida, including a light pink bloom that he keeps calling Surfqueeny after my first AOL screen name. The Plumeria Society of America would identify it as a NOID—pronounced like the Domino’s mascot of yore and simply meaning “no ID,” origin unknown. Dad gave me a Kauka Wilder variety when I left grad school in North Carolina for New Orleans nine years ago. I did just about everything to kill it. The plant didn’t bloom until it was ten feet tall—the flower like a pop star’s fake nails, with long, narrow petals in hues of bright yellow and fuchsia—an umbrella with a clunky nine-foot handle. These days I have eight plumerias and I consider myself fairly obsessed, which is why I’m here in South Florida this May weekend: to convene with the especially obsessed. (...)

The hills are alive with the sound of plumeria freaks saying I have five of these, and let me tell you, they’re the gift that keeps on giving, or I tried to root this one and it rotted on me. Irish Spring soap is strung from the trees, which Hetty tells us is to prevent deer from eating the flowers. Apparently plumeria are very tasty to certain animals, the American bulldog, for instance. “Mine used to eat the whole dang plant,” Terry says. Plumeria’s many known enemies include wild hogs, fungi, spider mites, and borer beetles. In Australia, there’s an endangered turkey that’s known to dig up and shred the plants to make its enormous sexing heaps.

Dennis, an Aussie grower who pronounces flowers flarers, has brought us all twirlers, a contraption he’s invented consisting of fishing line glued to a tiny toothpick-size stick, which various people on the hill are now using to feel up the insides of the flowers, thereby encouraging the anthers to drop their pollen and produce a seedpod. This is called hand-pollination, and it can create new types of plumeria, since seeds aren’t always true to the mother plant. Cross-pollination is the surest bet for a new type of bloom, but it requires a scalpel and a surgical method first discovered in the 1950s by hybridization pioneer Bill Moragne, who named dozens of cultivars in honor of his family: the Cyndi Moragne, the Edi Moragne, and the crowd-pleasing Jeannie Moragne.

There are trees out here with seedpods already on them, which is something to behold—they resemble giant glossy beans or overripe bananas or anorexic eggplants conjoined at the tip. In the end, though, they all dry out and turn the same crispy brown like a giant dead roach that splits open to reveal a bunch of smaller roachlike seeds inside. It’s kind of gross, but seedlings are the only way to get a new, undiscovered bloom.

“Let’s talk about seeds,” Mike, the emcee, says when we reconvene after lunch. “What’s the best way to store them?”

“Prescription bottle!” the audience answers.

“Yes, we have a lot of those around, don’t we?”

by Gwendolyn Knapp, Oxford American | Read more:

Image: Peter Rowley

What North Korean Defectors Think of North Korea

[ed. Fascinating. If you're at all curious about what life is like in North Korea, take a moment to watch this. I'd also recommend reading The Orphan Master's Son by Adam Johnson.]

Tuesday, December 6, 2016

Amazon Plans to *Disrupt* the Bodega Industry with "Amazon Go"

Thank GOD. I was just about to write a 20,000-word thinkin' piece about how dangerously close human beings have been getting to their food sources lately. But luckily, Seattle's own Amazon dot com has stepped in and saved me from my task. Today, the mega-retailer announced the launch of Amazon Go, a convenience store designed to end the thousand inconveniences of convenience stores.

Forget for a moment that weird patriarchy-perpetuating cupcake scene and just repeat the following words to yourself: "Computer vision. Deep Learning Algorithms. Sensor fusion."

With this "just walk out" technology, Amazon is poised to remove the "service" from service industries, and it can't happen fast enough for this on-the-go guy. Talking to butchers and bakers and the people who work at the deli? UGH. Catching five minutes of a soccer game with the guy who owns the bodega down the street? FUCK THAT. I want my food, I want it wrapped in plastic, I want to pay for it with my phone, and I want all that so I can spend more time crying at my desk.

Speaking of food—what's on offer? The Seattle Times tells us:

The store features ready-to-eat meals and snacks prepared by on-site chefs or local bakeries. There are also essentials such as bread and milk, as well as high-end cheese and chocolate.

Amazon says there will be well-known brands as well as 'special finds we’re excited to introduce to customers.' That includes an 'Amazon Meal Kit,' which contains ingredients needed to make a meal for two in 30 minutes.

Okay fine. If this convenience store helps tech workers with demanding jobs eat better / more locally, then bully for them, I guess.

But one small thing. If these smartstores or phonemarts or Amazones or whatever really cool name we start calling them begin to proliferate, guess who might be disproportionally inconvenienced? According to the Ethnic Business Coalition, immigrants and refugees own 53 percent of the country's grocery stores. And, as the Times notes, "The Bureau of Labor Statistics said in a report this year that cashiers were the second-largest occupation, with 3.5 million employed in the U.S." So, you know. Them.

by Rich Smith, The Stranger | Read more:

Image: Amazon

[ed. I think retail salespeople (4.5 mil.) are the most common occupation in the U.S., right before cashiers (3.3 mil), so if this catches on (and no reason to believe it won't) this could actually be a two-fer in terms of wiping out a large segment of the working population. Not to mention self-driving trucks and truck drivers (1.6 mil). Statistics via:]

A photograph taken during the Apollo 16 mission, of a family portrait placed on the lunar surface. On the back, astronaut Charles “Charlie” Duke wrote, “This is the family of astronaut Charlie Duke from planet Earth who landed on the Moon on April 20, 1972.” The image appeared in The Moon: 1968–1972, published in October by T. Adler Books. Courtesy T. Adler Books and NASA, Johnson Space Center & NASA History Division

via:

Monday, December 5, 2016

Google, Democracy and the Truth About Internet Search

Google is search. It’s the verb, to Google. It’s what we all do, all the time, whenever we want to know anything. We Google it. The site handles at least 63,000 searches a second, 5.5bn a day. Its mission as a company, the one-line overview that has informed the company since its foundation and is still the banner headline on its corporate website today, is to “organise the world’s information and make it universally accessible and useful”. It strives to give you the best, most relevant results. And in this instance the third-best, most relevant result to the search query “are Jews… ” is a link to an article from stormfront.org, a neo-Nazi website. The fifth is a YouTube video: “Why the Jews are Evil. Why we are against them.”

The sixth is from Yahoo Answers: “Why are Jews so evil?” The seventh result is: “Jews are demonic souls from a different world.” And the 10th is from jesus-is-saviour.com: “Judaism is Satanic!”

There’s one result in the 10 that offers a different point of view. It’s a link to a rather dense, scholarly book review from thetabletmag.com, a Jewish magazine, with the unfortunately misleading headline: “Why Literally Everybody In the World Hates Jews.”

I feel like I’ve fallen down a wormhole, entered some parallel universe where black is white, and good is bad. Though later, I think that perhaps what I’ve actually done is scraped the topsoil off the surface of 2016 and found one of the underground springs that has been quietly nurturing it. It’s been there all the time, of course. Just a few keystrokes away… on our laptops, our tablets, our phones. This isn’t a secret Nazi cell lurking in the shadows. It’s hiding in plain sight. (...)

Google isn’t just a search engine, of course. Search was the foundation of the company but that was just the beginning. Alphabet, Google’s parent company, now has the greatest concentration of artificial intelligence experts in the world. It is expanding into healthcare, transportation, energy. It’s able to attract the world’s top computer scientists, physicists and engineers. It’s bought hundreds of start-ups, including Calico, whose stated mission is to “cure death” and DeepMind, which aims to “solve intelligence”.

And 20 years ago it didn’t even exist. When Tony Blair became prime minister, it wasn’t possible to Google him: the search engine had yet to be invented. The company was only founded in 1998 and Facebook didn’t appear until 2004. Google’s founders Sergey Brin and Larry Page are still only 43. Mark Zuckerberg of Facebook is 32. Everything they’ve done, the world they’ve remade, has been done in the blink of an eye.

But it seems the implications about the power and reach of these companies are only now seeping into the public consciousness. I ask Rebecca MacKinnon, director of the Ranking Digital Rights project at the New America Foundation, whether it was the recent furore over fake news that woke people up to the danger of ceding our rights as citizens to corporations. “It’s kind of weird right now,” she says, “because people are finally saying, ‘Gee, Facebook and Google really have a lot of power’ like it’s this big revelation. And it’s like, ‘D’oh.’”

MacKinnon has a particular expertise in how authoritarian governments adapt to the internet and bend it to their purposes. “China and Russia are a cautionary tale for us. I think what happens is that it goes back and forth. So during the Arab spring, it seemed like the good guys were further ahead. And now it seems like the bad guys are. Pro-democracy activists are using the internet more than ever but at the same time, the adversary has gotten so much more skilled.”

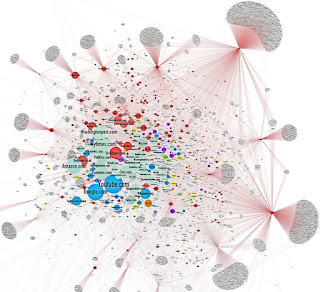

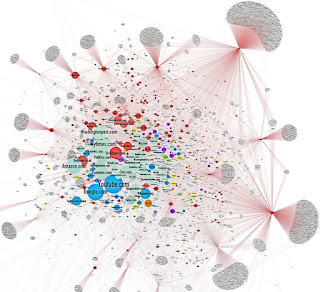

Last week Jonathan Albright, an assistant professor of communications at Elon University in North Carolina, published the first detailed research on how rightwing websites had spread their message. “I took a list of these fake news sites that was circulating, I had an initial list of 306 of them and I used a tool – like the one Google uses – to scrape them for links and then I mapped them. So I looked at where the links went – into YouTube and Facebook, and between each other, millions of them… and I just couldn’t believe what I was seeing.

“They have created a web that is bleeding through on to our web. This isn’t a conspiracy. There isn’t one person who’s created this. It’s a vast system of hundreds of different sites that are using all the same tricks that all websites use. They’re sending out thousands of links to other sites and together this has created a vast satellite system of rightwing news and propaganda that has completely surrounded the mainstream media system.”

He found 23,000 pages and 1.3m hyperlinks. “And Facebook is just the amplification device. When you look at it in 3D, it actually looks like a virus. And Facebook was just one of the hosts for the virus that helps it spread faster. You can see the New York Times in there and the Washington Post and then you can see how there’s a vast, vast network surrounding them. The best way of describing it is as an ecosystem. This really goes way beyond individual sites or individual stories. What this map shows is the distribution network and you can see that it’s surrounding and actually choking the mainstream news ecosystem.” (...)

But it’s where it goes from here that’s truly frightening. I ask him how it can be stopped. “I don’t know. I’m not sure it can be. It’s a network. It’s far more powerful than any one actor.”

So, it’s almost got a life of its own? “Yes, and it’s learning. Every day, it’s getting stronger.”

The more people who search for information about Jews, the more people will see links to hate sites, and the more they click on those links (very few people click on to the second page of results) the more traffic the sites will get, the more links they will accrue and the more authoritative they will appear. This is an entirely circular knowledge economy that has only one outcome: an amplification of the message. Jews are evil. Women are evil. Islam must be destroyed. Hitler was one of the good guys.

And the constellation of websites that Albright found – a sort of shadow internet – has another function. More than just spreading rightwing ideology, they are being used to track and monitor and influence anyone who comes across their content. “I scraped the trackers on these sites and I was absolutely dumbfounded. Every time someone likes one of these posts on Facebook or visits one of these websites, the scripts are then following you around the web. And this enables data-mining and influencing companies like Cambridge Analytica to precisely target individuals, to follow them around the web, and to send them highly personalised political messages. This is a propaganda machine. It’s targeting people individually to recruit them to an idea. It’s a level of social engineering that I’ve never seen before. They’re capturing people and then keeping them on an emotional leash and never letting them go.”

by Carole Cadwalladr, The Guardian | Read more:

Image: Jonathan Albright

The sixth is from Yahoo Answers: “Why are Jews so evil?” The seventh result is: “Jews are demonic souls from a different world.” And the 10th is from jesus-is-saviour.com: “Judaism is Satanic!”

There’s one result in the 10 that offers a different point of view. It’s a link to a rather dense, scholarly book review from thetabletmag.com, a Jewish magazine, with the unfortunately misleading headline: “Why Literally Everybody In the World Hates Jews.”

I feel like I’ve fallen down a wormhole, entered some parallel universe where black is white, and good is bad. Though later, I think that perhaps what I’ve actually done is scraped the topsoil off the surface of 2016 and found one of the underground springs that has been quietly nurturing it. It’s been there all the time, of course. Just a few keystrokes away… on our laptops, our tablets, our phones. This isn’t a secret Nazi cell lurking in the shadows. It’s hiding in plain sight. (...)

Google isn’t just a search engine, of course. Search was the foundation of the company but that was just the beginning. Alphabet, Google’s parent company, now has the greatest concentration of artificial intelligence experts in the world. It is expanding into healthcare, transportation, energy. It’s able to attract the world’s top computer scientists, physicists and engineers. It’s bought hundreds of start-ups, including Calico, whose stated mission is to “cure death” and DeepMind, which aims to “solve intelligence”.

And 20 years ago it didn’t even exist. When Tony Blair became prime minister, it wasn’t possible to Google him: the search engine had yet to be invented. The company was only founded in 1998 and Facebook didn’t appear until 2004. Google’s founders Sergey Brin and Larry Page are still only 43. Mark Zuckerberg of Facebook is 32. Everything they’ve done, the world they’ve remade, has been done in the blink of an eye.

But it seems the implications of the power and reach of these companies are only now seeping into the public consciousness. I ask Rebecca MacKinnon, director of the Ranking Digital Rights project at the New America Foundation, whether it was the recent furore over fake news that woke people up to the danger of ceding our rights as citizens to corporations. “It’s kind of weird right now,” she says, “because people are finally saying, ‘Gee, Facebook and Google really have a lot of power’ like it’s this big revelation. And it’s like, ‘D’oh.’”

MacKinnon has a particular expertise in how authoritarian governments adapt to the internet and bend it to their purposes. “China and Russia are a cautionary tale for us. I think what happens is that it goes back and forth. So during the Arab spring, it seemed like the good guys were further ahead. And now it seems like the bad guys are. Pro-democracy activists are using the internet more than ever but at the same time, the adversary has gotten so much more skilled.”

China Amplifies Warning on Taiwan

[ed. Will someone please take this guy's Twitter account away? It's like giving a kid a box of matches in a room full of gasoline. Twitter bans users for hate speech and terrorism threats; why not for endangering national security? As I recall, the NSA had serious problems with Obama's beloved BlackBerry when he took office, so restrictions were imposed (and still are). Now we have this. And don't even get me started on how this compares to the pearl-clutching over Hillary Clinton's non-secure email servers. See also: Twitter Founder Feels 'Complicated' About Donald Trump's Tweeting.]

China warned President-elect Donald J. Trump on Monday that he was risking a confrontation over Taiwan, even as Mr. Trump broadened the dispute with new messages on Twitter challenging Beijing’s trade policies and military activities in the South China Sea.

A front-page editorial in the overseas edition of People’s Daily, the official organ of the Communist Party of China, denounced Mr. Trump for speaking Friday with Taiwan’s president, Tsai Ing-wen, warning that “creating troubles for the China-U.S. relationship is creating troubles for the U.S. itself.” The rebuke was much tougher than the Chinese Foreign Ministry’s initial response to the phone call, which broke with decades of American diplomatic practice.

For his part, Mr. Trump seemed to take umbrage at the idea that he needed China’s approval to speak with Ms. Tsai. In two posts on Twitter, he wrote: “Did China ask us if it was O.K. to devalue their currency (making it hard for our companies to compete), heavily tax our products going into their country (the U.S. doesn’t tax them) or to build a massive military complex in the middle of the South China Sea? I don’t think so!” (...)

The Chinese government’s initial reaction to Mr. Trump’s call has already faced a torrent of criticism on social media from Chinese who complained it was not tough enough. The statement from Foreign Minister Wang Yi, which was relatively low-key given the unprecedented nature of the call, refrained from criticizing Mr. Trump, instead accusing Taiwan of playing a “little trick” on the American president-elect.

That offered Mr. Trump a face-saving way out of the imbroglio, and a chance to de-escalate. But the messages he posted on Twitter late Sunday stepped up the pressure on China’s leaders instead.

by Jane Perlez, NY Times | Read more:

Image: USGS/Getty

Immune System, Unleashed by Cancer Therapies, Can Attack Organs

As Chuck Peal lay in a Waterbury, Conn., emergency room one Sunday in early September, doctors furiously tried to make sense of his symptoms. Mr. Peal, 61, appeared to be dying, and they were not sure why.

He slipped in and out of consciousness, his blood pressure plummeted, his potassium levels soared and his blood sugar spiked to 10 times the normal level. A doctor suspected a heart attack, but uncertainty left him urgently researching the situation on his phone.

This was not a heart attack. Mr. Peal’s body was attacking itself, a severe reaction by his immune system that was a side effect of a seemingly miraculous cancer treatment aimed at saving his life.

In the seven weeks prior, doctors at Yale had combated Mr. Peal’s melanoma with two of the most promising drugs in cancer treatment today. These medicines work by stimulating the immune system to attack cancer as ferociously as it does other threats, like viruses and bacteria.

These so-called immunotherapy drugs have been hailed as a breakthrough in cancer treatment, attracting billions of research dollars and offering new hope to patients out of options. But as their use grows, doctors are finding that they pose serious risks that stem from the very thing that makes them effective. An unleashed immune system can attack healthy, vital organs: notably the bowel, the liver and the lungs, but also the kidneys, the adrenal and pituitary glands, the pancreas and, in rare cases, the heart.